
5/26/2023 Dim3 in CUDA

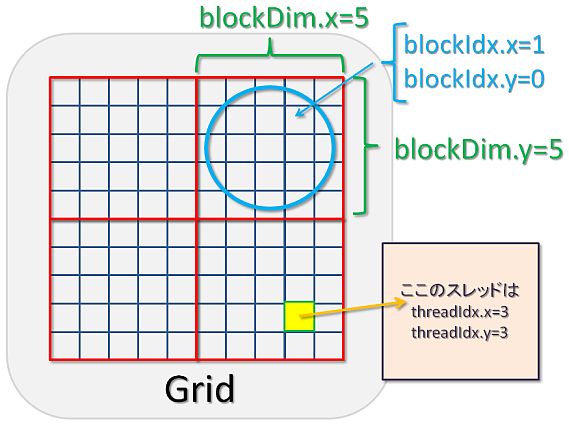

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA.

This is the fourth post in the CUDA Refresher series, which has the goal of refreshing key concepts in CUDA, tools, and optimization for beginning or intermediate developers.

The CUDA programming model provides an abstraction of GPU architecture that acts as a bridge between an application and its possible implementation on GPU hardware. This post outlines the main concepts of the CUDA programming model by showing how they are exposed in general-purpose programming languages like C/C++.

Let me introduce two keywords widely used in the CUDA programming model: host and device. The host is the CPU available in the system. The system memory associated with the CPU is called host memory. The GPU is called a device, and GPU memory is likewise called device memory.

To execute any CUDA program, there are three main steps:

- Copy the input data from host memory to device memory, also known as host-to-device transfer.
- Load the GPU program and execute, caching data on-chip for performance.
- Copy the results from device memory to host memory, also called device-to-host transfer.

Figure 1 shows that the CUDA kernel is a function that gets executed on the GPU. The parallel portion of your application is executed K times in parallel by K different CUDA threads, as opposed to only one time like regular C/C++ functions.

Figure 1. The kernel is a function executed on the GPU.

Every CUDA kernel starts with a `__global__` declaration specifier. Programmers compute a unique global ID for each thread by using built-in variables.

Figure 2. CUDA kernels are subdivided into blocks.

A group of threads is called a CUDA block. A kernel is executed as a grid of blocks of threads (Figure 2).

Each CUDA block is executed by one streaming multiprocessor (SM) and cannot be migrated to other SMs in the GPU (except during preemption, debugging, or CUDA dynamic parallelism). One SM can run several concurrent CUDA blocks, depending on the resources needed by the CUDA blocks. Each kernel is executed on one device, and CUDA supports running multiple kernels on a device at one time. Figure 3 shows the kernel execution and mapping on hardware resources available in the GPU.

CUDA defines built-in 3D variables for threads and blocks. Threads are indexed using the built-in 3D variable `threadIdx`. Three-dimensional indexing provides a natural way to index elements in vectors, matrices, and volumes and makes CUDA programming easier.
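To make the three execution steps and the built-in index variables concrete, here is a minimal sketch of a 2D element-wise matrix addition. It is not taken from the original post: the names `addMatrices`, `runAddMatrices`, and `check`, the 16x16 block shape, and the 100x70 matrix size are all assumptions chosen for illustration. It uses `dim3` to describe the grid and block shapes, and it builds each thread's global ID from `blockIdx`, `blockDim`, and `threadIdx`.

```cuda
#include <cstdio>
#include <cstdlib>
#include <vector>
#include <cuda_runtime.h>

// Hypothetical kernel: adds two width x height matrices element-wise.
__global__ void addMatrices(const float* a, const float* b, float* c,
                            int width, int height)
{
    // Global 2D ID of this thread, built from the built-in variables.
    int col = blockIdx.x * blockDim.x + threadIdx.x;
    int row = blockIdx.y * blockDim.y + threadIdx.y;

    // The grid may overshoot the matrix edges, so guard the access.
    if (col < width && row < height) {
        int i = row * width + col;
        c[i] = a[i] + b[i];
    }
}

// Hypothetical helper: abort with a message if a CUDA runtime call fails.
static void check(cudaError_t err, const char* what)
{
    if (err != cudaSuccess) {
        std::fprintf(stderr, "%s: %s\n", what, cudaGetErrorString(err));
        std::exit(EXIT_FAILURE);
    }
}

// Runs the three steps and returns the number of mismatched elements.
int runAddMatrices(int width, int height)
{
    const size_t count = static_cast<size_t>(width) * height;
    const size_t bytes = count * sizeof(float);

    std::vector<float> hA(count), hB(count), hC(count, 0.0f);
    for (size_t i = 0; i < count; ++i) {
        hA[i] = static_cast<float>(i);
        hB[i] = 2.0f * static_cast<float>(i);
    }

    float *dA = nullptr, *dB = nullptr, *dC = nullptr;
    check(cudaMalloc(&dA, bytes), "cudaMalloc dA");
    check(cudaMalloc(&dB, bytes), "cudaMalloc dB");
    check(cudaMalloc(&dC, bytes), "cudaMalloc dC");

    // Step 1: host-to-device transfer of the input data.
    check(cudaMemcpy(dA, hA.data(), bytes, cudaMemcpyHostToDevice), "copy a");
    check(cudaMemcpy(dB, hB.data(), bytes, cudaMemcpyHostToDevice), "copy b");

    // Step 2: launch the kernel as a grid of blocks of threads.
    dim3 block(16, 16);  // 256 threads per block; z defaults to 1
    dim3 grid((width + block.x - 1) / block.x,
              (height + block.y - 1) / block.y);
    addMatrices<<<grid, block>>>(dA, dB, dC, width, height);
    check(cudaGetLastError(), "kernel launch");

    // Step 3: device-to-host transfer of the results. This blocking copy
    // on the default stream waits for the kernel to finish first.
    check(cudaMemcpy(hC.data(), dC, bytes, cudaMemcpyDeviceToHost), "copy c");

    check(cudaFree(dA), "cudaFree dA");
    check(cudaFree(dB), "cudaFree dB");
    check(cudaFree(dC), "cudaFree dC");

    int mismatches = 0;
    for (size_t i = 0; i < count; ++i) {
        if (hC[i] != hA[i] + hB[i]) ++mismatches;
    }
    return mismatches;
}

int main()
{
    int mismatches = runAddMatrices(100, 70);
    std::printf("mismatches: %d\n", mismatches);
    return mismatches == 0 ? 0 : 1;
}
```

Assuming the file is saved as `example.cu` (a hypothetical name), it can be built with `nvcc example.cu -o example` on a machine with the CUDA toolkit and a CUDA-capable GPU. The matrix size is deliberately not a multiple of the block size, so the bounds check in the kernel is actually exercised by the partially filled blocks at the right and bottom edges of the grid.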
